April 07, 2021

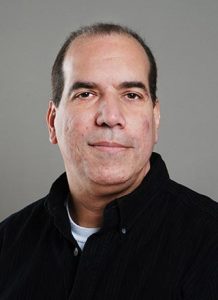

Robots today may look human-like, but it will take a new type of computing for true artificial intelligence to be a reality, Gui DeSouza says.

Robotics has come a long way since Gui DeSouza was defending his dissertation on automated systems for automotive production lines in the 1990s. But he believes it will take a new type of computing before we see the lifelike robots made popular in science fiction.

For National Robotics Week, DeSouza weighed in on the evolution of robotics and what’s in store for the future. DeSouza is an associate professor of electrical engineering and computer science and director of the Vision-Guided and Intelligent Robotics Lab. He is also an associate editor for Transactions on Artificial Intelligence (AI), a journal the Institute of Electrical and Electronics Engineers (IEEE) launched last year.


DeSouza uses robots and robotic vision in a variety of research applications. He also studies autonomous robots and why those systems break down. He has partnered with the College of Agriculture, Food and Natural Resources to use robotic systems to study the phenotypes and genotypes of crops and cattle and how they affect food production. DeSouza has also developed AI systems for power plant diagnostics, automated assembly lines, early detection of lymphedema and, more recently, the study of voice fatigue in student teachers.

But what intrigues DeSouza most nowadays is why none of those systems, or indeed any smart technology or robot found today, can pass the Turing test, and what it will mean for the Chinese Room Argument when they finally do. The Turing test, proposed by computer scientist and mathematician Alan Turing in 1950, is designed to determine whether a machine can trick a person into thinking it is human. The Chinese Room Argument, an even more consequential idea first raised by philosopher John Searle in 1980, asks whether a computer that can fool people in this way actually understands what it is doing.

To DeSouza, that will be the era of true AI.

Quantum Will Be Key

Machine learning, pattern recognition and computer vision, the tools that power today's autonomous robots, are beautiful systems, DeSouza said. They have already affected our lives and provided ways to solve everyday problems. But they represent only a small subset of what AI really entails. In essence, they are distant predecessors of future AI, he said: think Neanderthals versus modern humans on the AI evolutionary scale.

“People talk a lot about AI today, but what we are doing is really far from hardcore AI,” he said. “There are systems being developed that try to model cognition, and those systems are impressive. And you can provide them information from books and texts. But right now there are a lot of very simple ways of fooling those systems, ways by which humans wouldn’t be fooled.”

Instead of waiting for today’s robots to become more lifelike, DeSouza says to keep an eye on advances in quantum technology. Today’s computers process information as bits, each of which is either 0 or 1. Quantum bits (qubits) can exist in a superposition of both states, and by exploiting quantum principles such as superposition and entanglement, certain algorithms can be sped up, in some cases exponentially. That would allow computers to process more data and arrive at solutions to AI-related problems that are out of reach today.
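To make the bits-versus-qubits contrast a little more concrete, here is a minimal classical simulation sketch in Python. It assumes the simplest textbook setup: n qubits start in the all-zeros state and a Hadamard gate is applied to each one, producing an equal superposition of every possible bit pattern. The function names `hadamard_all` and `probabilities` are hypothetical, chosen only for illustration. The sketch shows that describing n qubits takes 2**n amplitudes, which is one reason such states are hard to simulate on ordinary computers; it does not by itself demonstrate a quantum speedup.

```python
import math


def hadamard_all(n_qubits):
    """Classically simulate n qubits that start in |00...0> and each pass
    through a Hadamard gate. The result is an equal superposition of all
    2**n basis states, stored as a list of 2**n real amplitudes."""
    dim = 2 ** n_qubits            # one amplitude per possible bit pattern
    amp = 1 / math.sqrt(dim)       # equal weight, normalized
    return [amp] * dim


def probabilities(state):
    """Born rule: the probability of measuring each bit pattern is the
    squared magnitude of its amplitude."""
    return [abs(a) ** 2 for a in state]


if __name__ == "__main__":
    for n in (1, 2, 10):
        state = hadamard_all(n)
        total = round(sum(probabilities(state)), 6)
        print(f"{n} qubit(s) -> {len(state)} amplitudes; total probability {total}")
```

Ten qubits already require 1,024 amplitudes in this representation, and the count doubles with every qubit added, while ten classical bits hold just one of those 1,024 patterns at a time.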

“Quantum computing will be key,” DeSouza said. “Quantum computers will make it possible for new and unprecedentedly more complex AI algorithms and approaches to be developed. Advancements in quantum computing will have a huge impact on everything and that’s going to be the next wave of computer science. The future of AI is directly related to the future of quantum computing. If college students want to be part of building this future, they should definitely study either of these areas.”